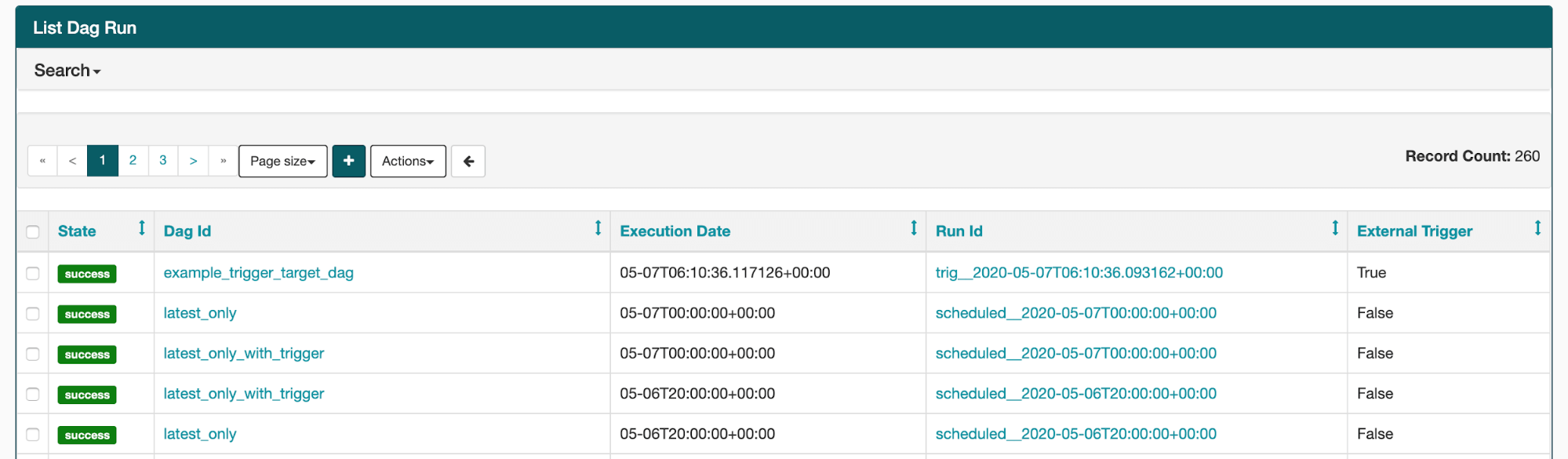

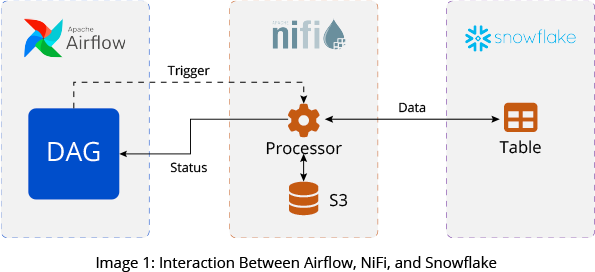

It can be frustrating when the Airflow scheduler fails to trigger DAGs at their scheduled time, disrupting your workflows. This article explains why DAGs may not be triggered and how to fix the issue. It also introduces SQLake, a tool that simplifies data pipeline orchestration.

Some common reasons a DAG is not triggered at the scheduled time are:

1. Airflow Runs Jobs at the End of an Interval

One possible reason for this issue is the start date of the DAG. In the provided code, the start date is set to the current date using the `time` module:

```python
from airflow import DAG

# default_args is defined elsewhere; here its start_date is the current date
dag = DAG(
    'run_job',
    default_args=default_args,
    catchup=False,
)
```

However, Airflow runs jobs at the end of an interval, not the beginning. This means the first run of the DAG happens only after the first interval has elapsed, rather than at the scheduled time. With a start date of "now", that first interval may never close, because the start date moves forward each time the scheduler re-parses the DAG file.

To solve this problem, you can either hard-code a static start date for the DAG or make sure that the dynamic start date is far enough in the past that it falls before the interval between executions. Static start dates are generally recommended because they give you more control over when the DAG runs, especially if you need to re-run jobs or backfill data.

2. Airflow Webserver and Scheduler Misconfiguration

Another possible issue is the configuration of the Airflow webserver and scheduler. If the DAG executes only once after you restart the webserver and scheduler, the cause may be a configuration problem or a connectivity problem between the two. Check the logs, or try restarting the webserver and scheduler again to see if that resolves the issue.

If the scheduler still does not trigger DAGs at the scheduled time, other issues may be at play. The problem could be specific to the version of Airflow you are using, or it could lie in your DAG code or the dependencies it uses.

Alternative Approach – Automated Orchestration

Although Airflow is a valuable tool, it can be challenging to troubleshoot. SQLake is a good alternative that automates data pipeline orchestration:

- Build reliable, maintainable, and testable data ingestion.
- Process batch and streaming data pipelines using familiar SQL syntax.
- Jobs are executed once and continue to run until stopped.
- There is no need for scheduling or orchestration.
- The compute cluster scales up and down automatically, simplifying the deployment and management of your data pipelines.

Here is a code example of joining multiple S3 data sources into SQLake and applying simple enrichments to the data. Run the following code in SQLake to ingest the data:

```sql
/* Ingest data */
CREATE S3 CONNECTION airflow_alternative_pipelines_samples
    AWS_ROLE = 'arn:aws:iam::949275490180:role/samples_role'
    EXTERNAL_ID = 'AIRFLOW_ALTERNATIVE_SAMPLES';

-- Create empty tables to use as staging for orders and sales data.
CREATE TABLE default_glue_catalog.database_a137bd.orders_raw_data();
CREATE TABLE default_glue_catalog.database_a137bd.sales_info_raw_data();

-- Create streaming jobs to ingest raw orders and sales data into the staging tables.
CREATE SYNC JOB load_orders_raw_data_from_s3
```
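
The start-date problem described under "Airflow Runs Jobs at the End of an Interval" can be illustrated with a small model in plain Python. This is a simplified sketch, not Airflow code: it assumes a fixed `timedelta` schedule and ignores cron expressions, timezones, and catchup. The function names (`first_run_time`, `has_triggered`, `dynamic_start_ever_triggers`, `safe_dynamic_start`) are hypothetical and chosen for illustration only.

```python
from datetime import datetime, timedelta


def first_run_time(start_date: datetime, interval: timedelta) -> datetime:
    # Simplified model: the run covering [start_date, start_date + interval)
    # is created only after that interval has closed.
    return start_date + interval


def has_triggered(start_date: datetime, interval: timedelta, now: datetime) -> bool:
    # True once the first interval after start_date has ended.
    return now >= first_run_time(start_date, interval)


def dynamic_start_ever_triggers(parse_times, interval: timedelta) -> bool:
    # With start_date = "now", each scheduler parse moves start_date forward
    # to the parse time, so the first interval is always still open.
    return any(has_triggered(t, interval, t) for t in parse_times)


def safe_dynamic_start(now: datetime, interval: timedelta) -> datetime:
    # Anchor to midnight, then step back one interval. The value is stable
    # across parses within the same day and already lies in the past.
    midnight = now.replace(hour=0, minute=0, second=0, microsecond=0)
    return midnight - interval


if __name__ == "__main__":
    daily = timedelta(days=1)
    static_start = datetime(2023, 1, 1)
    print(has_triggered(static_start, daily, datetime(2023, 1, 1, 12)))  # False: interval still open
    print(has_triggered(static_start, daily, datetime(2023, 1, 2)))      # True: first interval closed
    parses = [datetime(2023, 1, 1) + timedelta(minutes=5 * i) for i in range(1000)]
    print(dynamic_start_ever_triggers(parses, daily))                    # False: never fires
    now = datetime(2023, 1, 5, 9, 30)
    print(has_triggered(safe_dynamic_start(now, daily), daily, now))     # True
```

The model shows why a static start date is the more predictable choice. The midnight-anchored variant only illustrates the "far enough in the past" option; it still changes from day to day, which complicates re-runs and backfills.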

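For the webserver and scheduler misconfiguration case, one quick check is whether the webserver can see a live scheduler. The sketch below assumes Airflow 2.x, whose webserver exposes a `/health` endpoint that reports `metadatabase` and `scheduler` status along with a `latest_scheduler_heartbeat` timestamp. The base URL `http://localhost:8080`, the 30-second threshold, and the helper names `diagnose` and `fetch_health` are assumptions for illustration.

```python
import json
import urllib.request
from datetime import datetime, timedelta
from typing import List


def diagnose(payload: dict, now: datetime,
             max_age: timedelta = timedelta(seconds=30)) -> List[str]:
    """Return a list of problems found in a /health response (empty if none)."""
    problems = []
    if payload.get("metadatabase", {}).get("status") != "healthy":
        problems.append("metadatabase reported unhealthy")
    scheduler = payload.get("scheduler", {})
    if scheduler.get("status") != "healthy":
        problems.append("scheduler reported unhealthy")
    beat = scheduler.get("latest_scheduler_heartbeat")
    if beat is None:
        problems.append("no scheduler heartbeat recorded")
    elif now - datetime.fromisoformat(beat) > max_age:
        # A stale heartbeat suggests the scheduler process is down or cannot
        # reach the same metadata database as the webserver.
        problems.append("scheduler heartbeat is stale")
    return problems


def fetch_health(base_url: str = "http://localhost:8080") -> dict:
    # Assumed endpoint location; adjust base_url to your deployment.
    with urllib.request.urlopen(f"{base_url}/health", timeout=5) as resp:
        return json.load(resp)
```

Usage, assuming a reachable webserver: `diagnose(fetch_health(), datetime.now(timezone.utc))` (with `timezone` imported from `datetime`). An empty list means the webserver sees a healthy scheduler. A stale heartbeat points toward the scheduler process or its database connection rather than toward the DAG code.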
